Riemannian stochastic variance reduced gradient algorithm on manifolds

Authors

H. Kasai, H. Sato, and B. Mishra

Abstract

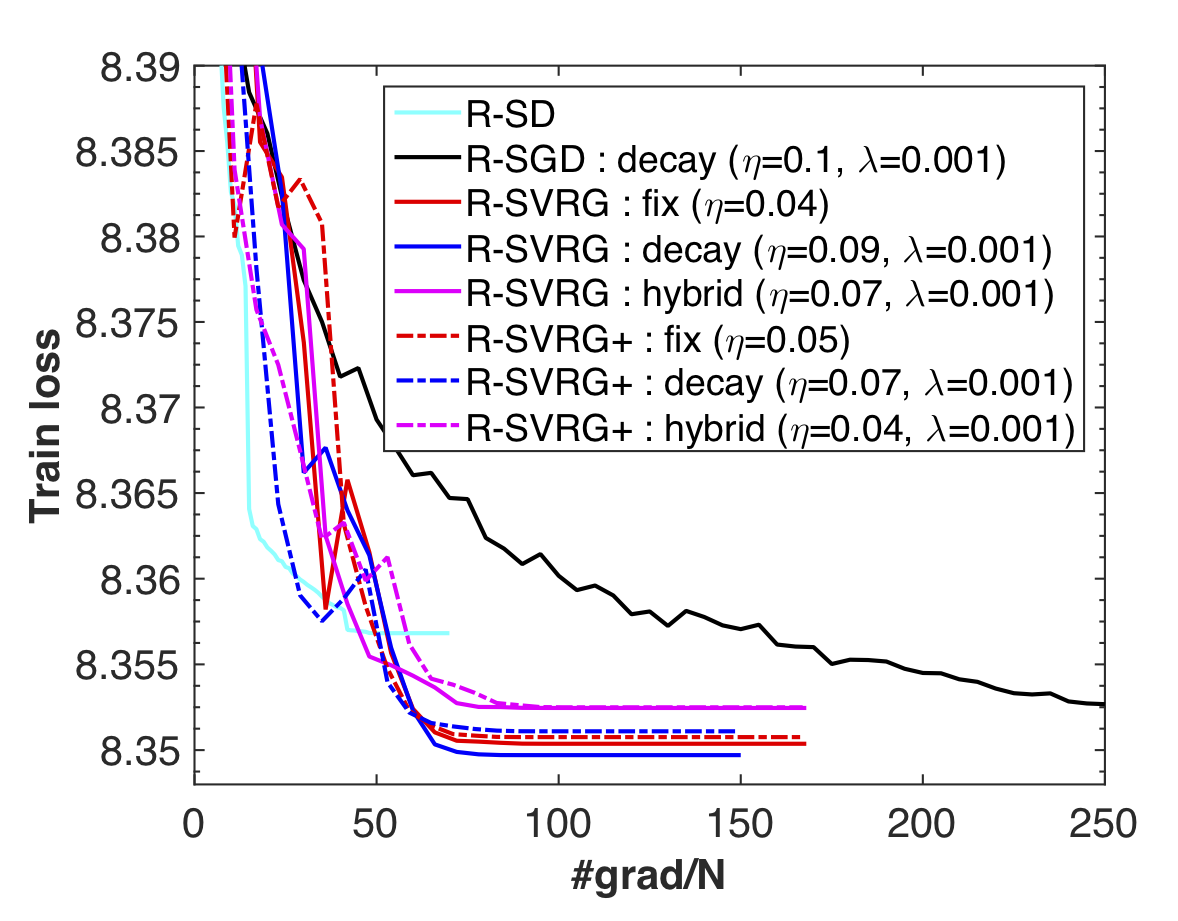

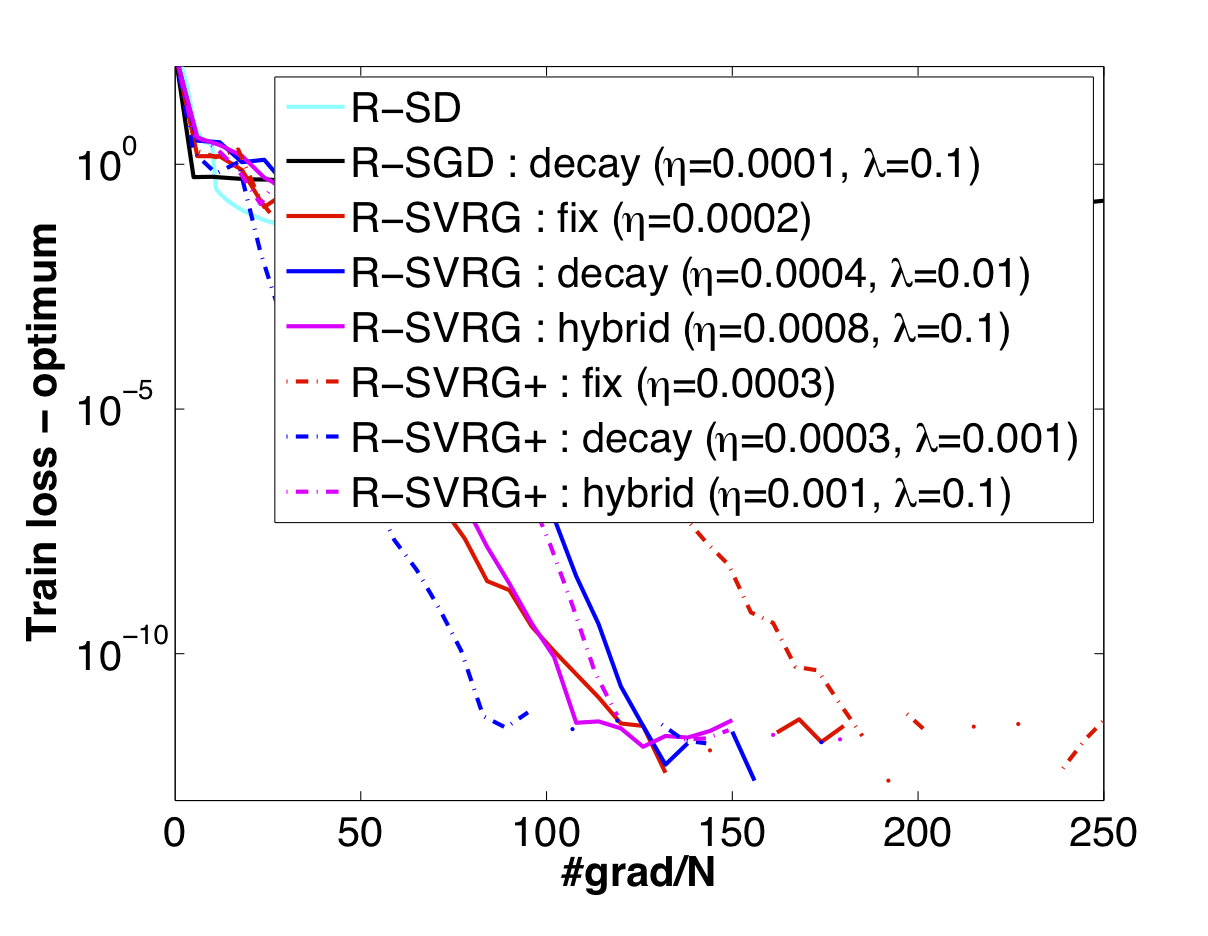

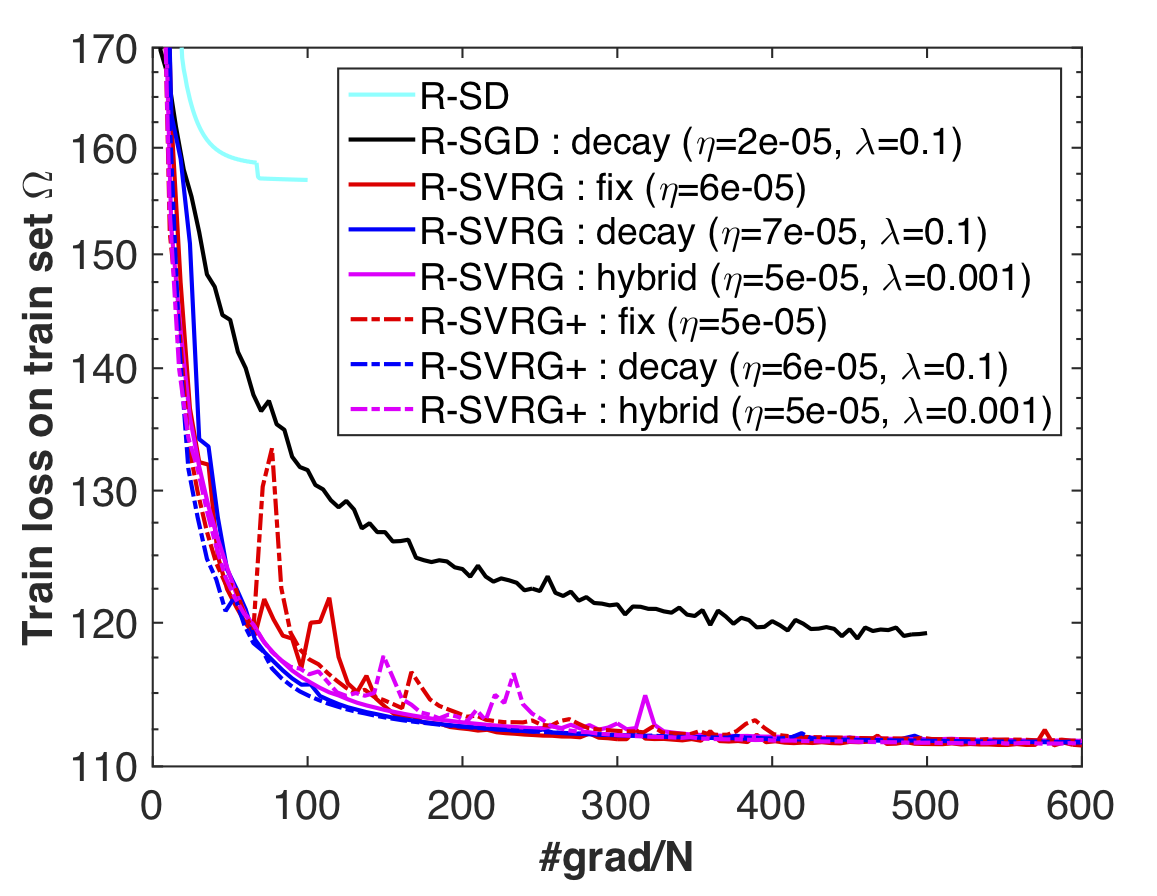

Stochastic variance reduction algorithms have recently become popular for minimizing the average of a large, but finite, number of loss functions. In this paper, we propose a novel Riemannian extension of the Euclidean stochastic variance reduced gradient algorithm (R-SVRG) to a compact manifold search space. To this end, we show the developments on the Grassmann manifold. The key challenges of averaging, adding, and subtracting multiple gradients are addressed with notions such as the logarithm mapping and parallel translation of vectors on the Grassmann manifold. We present a global convergence analysis of the proposed algorithm with a decaying step-size and a local convergence rate analysis under a fixed step-size with some natural assumptions. The proposed algorithm is applied to a number of problems on the Grassmann manifold, such as principal components analysis, low-rank matrix completion, and the Karcher mean computation. In all these cases, the proposed algorithm outperforms the standard Riemannian stochastic gradient descent algorithm.
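To make the roles of the logarithm mapping and parallel translation concrete, the following is a minimal sketch of an R-SVRG-style iteration, not the authors' Matlab code. It assumes the simplest Grassmann manifold Gr(1, n), where a point is a line represented by a unit vector and the exponential map, logarithm map, and parallel translation have closed forms, so only the Python standard library is needed. It is applied to a rank-one PCA cost f_i(u) = ||z_i - u u^T z_i||^2. All function names, the demo data, the step size, and the choice of the last inner iterate as the next anchor point are illustrative assumptions.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def axpy(alpha, x, y):
    """Return alpha * x + y for plain Python lists."""
    return [alpha * xi + yi for xi, yi in zip(x, y)]

def rgrad(u, z):
    """Riemannian gradient at unit vector u of f_z(u) = ||z - u u^T z||^2."""
    c = dot(z, u)
    # Euclidean gradient is -2 c z; the tangent projection removes its u-component.
    return [-2.0 * c * (zi - c * ui) for zi, ui in zip(z, u)]

def exp_map(u, xi):
    """Exponential map on Gr(1, n): follow the geodesic from u along xi."""
    s = math.sqrt(dot(xi, xi))
    if s < 1e-15:
        return list(u)
    v = [math.cos(s) * ui + math.sin(s) * x / s for ui, x in zip(u, xi)]
    nv = math.sqrt(dot(v, v))  # renormalize to guard against round-off drift
    return [vi / nv for vi in v]

def transport(u_from, u_to, zeta):
    """Parallel-translate tangent vector zeta from u_from to u_to along the
    geodesic whose initial direction is the logarithm map Log_{u_from}(u_to).
    Assumes u_from and u_to are not orthogonal (outside the cut locus)."""
    c = dot(u_from, u_to)
    sign = 1.0 if c > 0 else -1.0  # u_to and -u_to represent the same line
    x = [(t - c * f) / c for f, t in zip(u_from, u_to)]
    nx = math.sqrt(dot(x, x))
    if nx < 1e-15:
        return [sign * zi for zi in zeta]
    w = [xi / nx for xi in x]      # unit direction of the logarithm map
    sigma = math.atan(nx)          # geodesic distance; Log = sigma * w
    a = dot(w, zeta)
    out = [zi + a * ((math.cos(sigma) - 1.0) * wi - math.sin(sigma) * fi)
           for zi, wi, fi in zip(zeta, w, u_from)]
    return [sign * oi for oi in out]

def rsvrg_pca(data, u0, step=0.02, epochs=10, inner_steps=None, seed=0):
    """Sketch of R-SVRG on Gr(1, n) for the rank-one PCA cost."""
    rng = random.Random(seed)
    n_samples = len(data)
    m = inner_steps or n_samples
    u_tilde = list(u0)
    for _ in range(epochs):
        # Full Riemannian gradient at the anchor point u_tilde.
        full = [0.0] * len(u0)
        for z in data:
            full = axpy(1.0 / n_samples, rgrad(u_tilde, z), full)
        u = list(u_tilde)
        for _ in range(m):
            z = data[rng.randrange(n_samples)]
            # Correction term, a tangent vector at u_tilde.
            corr = axpy(-1.0, full, rgrad(u_tilde, z))
            # Move it to the tangent space at u before subtracting.
            moved = transport(u_tilde, u, corr)
            xi = axpy(-1.0, moved, rgrad(u, z))
            u = exp_map(u, [-step * x for x in xi])
        u_tilde = u  # assumption: the last inner iterate becomes the next anchor
    return u_tilde

def make_demo_data(n_samples=200, dim=5, noise=0.1, seed=1):
    """Hypothetical data concentrated along the first coordinate axis."""
    rng = random.Random(seed)
    return [[(rng.gauss(0.0, 1.0) if j == 0 else 0.0) + noise * rng.gauss(0.0, 1.0)
             for j in range(dim)] for _ in range(n_samples)]

if __name__ == "__main__":
    dim = 5
    u = rsvrg_pca(make_demo_data(dim=dim), [1.0 / math.sqrt(dim)] * dim)
    print("estimated leading direction:", u)
```

The two gradients in the correction term live in the tangent space at the anchor point, while the fresh stochastic gradient lives in the tangent space at the current iterate, so they cannot be subtracted directly. The `transport` step is what makes the subtraction meaningful. For Gr(r, n) with r > 1, the same structure applies, but the three geometric maps require a singular value decomposition.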

Downloads

- Status: Technical report, 2016. A shorter version has been accepted to the 9th NeurIPS workshop on optimization for machine learning (OPT2016), to be held in Barcelona.

- Paper: [arXiv:1702.05594] [arXiv:1605.07367].

- Talk: Hiroyuki Kasai presented the work at ICCOPT 2016, Tokyo. The slides are here.

- Matlab code: [RSVRG_24May2016.zip].